Trading card games (TCGs) are not like other games of skill, such as chess. In chess, all competitors use the same game pieces; there is no customizing (or randomizing) aspect to them.

TCGs, of course, require competitors to craft their own decks of cards (or to play in a preconstructed or draft format, which still results in an asymmetrical construction of decks between participants). Because the game pieces are asymmetric, they contribute differently to each competitor’s win probability.

Put another way, two competitors pitted in a TCG match against one another not only have differing skill in the game, but different decks.

How much does a TCG competitor’s deck contribute to his or her win probability?

This discussion is a partial reproduction of this topic from my paper An Outline of a Basic Sportsbook Model Using Microsoft Excel, R, and Bayesian Inference.

In this blog post, we take a closer look at this concept, as well as several alternate forms of rating “deck strength” that were rejected from the aforementioned white paper.

A Few Notes on Terminology

Throughout this post, we use the Glicko-2 skill rating system, a Bayesian-style update to the traditional Elo System used in chess and other competitive games.

This system uses skill ratings as described by a Gaussian distribution. Thus, Glicko-2 skill ratings have both ratings and rating deviations.

Throughout this discussion, we will refer to the concept of “deck strength”, denoted by the variable Γ (“gamma”).

Competitor “Performance”

When competitors play a TCG, they play a zero-sum game. This means that each competitor seeks a mutually exclusive victory against the other (i.e., only one competitor will win and the other will lose, or they will both tie).

In mathematical terms, we say that “performance”, 𝒫, is calculated as:

This is to say that a competitor, call him or her Competitor i, using Deck a against Competitor j using Deck b in Match k, will have a performance result that is a comparison of his or her skill, plus an offset based on the matchup of Decks a and b, against Competitor j’s skill. (The term ε is an error term attributable to “luck”, which we don’t, and can’t, really control for.)

When 𝒫 = 1, it’s a win for Competitor i and a loss for Competitor j; when 𝒫 = 0, it’s a loss for Competitor i and a win for Competitor j; and when 𝒫 = 0.5, it’s a tie for both participants.

In formal terms, we now wish to understand how much to offset Competitor i’s performance based on the conditional matchup between Decks a and b (the Deck a|b term in our performance equation).

Concept of “Deck Strength”

We call this concept “deck strength”: the idea that we offset Competitor i’s “performance” relative to Competitor j based on the matchup of Decks a and b.

How much stronger is Deck a against Deck b, and consequently, how much does it influence the performance of the two competitors?

Thought Experiment

Consider the following thought experiment:

Two competitors, call them Competitor i and Competitor j, face one another in a match. Both competitors have identical skill ratings and rating deviations. The win probability of Competitor i against Competitor j is 0.5000, that is, P(i|j) = 0.5000. The only difference between these two competitors will be the decks that they use.

Suppose Competitor i uses Deck a and Competitor j uses Deck b. Also suppose that, given our records, Deck a beats Deck b with 0.6100 probability, that is, P(a|b) = 0.6100. It then follows that Competitor i using Deck a against Competitor j using Deck b has a win probability of 0.6100, that is, P(i,a | j,b) = 0.6100.

Again, with two dead even skill ratings and rating deviations, the only decisive factor influencing win probability is the win probability between the two decks in use.

Deck Strength as Skill Rating Offset

We conceptualize this deck strength factor as an offset to the competitors’ Glicko-2 skill ratings (this same concept might be applied to other numerical skill rating systems, with some adjustment).

This means that, if Deck a is “stronger” than Deck b, we will give the user of Deck a more skill rating points because of it. If, however, Deck b is “stronger” than Deck a, we will give the user of Deck b more skill rating points (or deduct skill rating points from the user of the “weaker” deck, which is the same thing).

As outlined in our thought experiment above, the amount of offset, which we will call Γ, is based on the conditional win probability of the two decks in use. That is to say, we take the probability that Deck a beats Deck b, ceteris paribus, and use this as the basis of our offset in skill rating.

Skill Rating Offset in Glicko-2

In a match such as Match 1 on Table 1, we do not offset either competitor’s Glicko-2 skill rating: the matchups are dead even. However, as shown in Matches 2 through 9 on Table 1, different deck matchups result in offsetting the competitors’ skill ratings by a certain amount.

Table 1. Nine Sample Matches Between Competitors and the Skill Rating Offset for Each.

| Match | Skill i, RD i | Skill j, RD j | P(a\|b) | Γ | P(i\|j) | P(i,a\|j,b) |
|---|---|---|---|---|---|---|
| 1 | 1500, 350 | 1500, 350 | 0.50 | 0 | 0.5000 | 0.5000 |
| 2 | 1500, 350 | 2000, 350 | 0.50 | 0 | 0.1272 | 0.1272 |
| 3 | 2000, 350 | 1500, 350 | 0.50 | 0 | 0.8728 | 0.8728 |
| 4 | 1500, 350 | 1500, 350 | 0.61 | 130 | 0.5000 | 0.6226 |
| 5 | 1500, 350 | 2000, 350 | 0.61 | 130 | 0.1272 | 0.1939 |
| 6 | 2000, 350 | 1500, 350 | 0.61 | 130 | 0.8728 | 0.9188 |
| 7 | 1500, 350 | 1500, 350 | 0.39 | -130 | 0.5000 | 0.3774 |
| 8 | 1500, 350 | 2000, 350 | 0.39 | -130 | 0.1272 | 0.0812 |
| 9 | 2000, 350 | 1500, 350 | 0.39 | -130 | 0.8728 | 0.8061 |

On Table 1, we list the skill rating and rating deviation (RD) for each competitor, i and j, as well as three probabilities: the win probability of Deck a against Deck b, P(a|b); the unadjusted win probability of Competitor i against Competitor j without taking decks into account, P(i|j); and the adjusted win probability of Competitor i using Deck a against Competitor j using Deck b, P(i,a|j,b). The RD for both competitors in each sample match is kept at 350 (the maximum allowable by the Glicko-2 system), which in these examples represents an environment of maximum uncertainty.

The term “Γ” on Table 1 is the adjustment term. This is the amount by which Competitor i’s skill rating is adjusted to reflect the probability of Deck a beating Deck b in a match against Competitor j. Thus, a positive Γ adds to Competitor i’s Glicko-2 skill rating, and consequently to his or her win probability, while a negative Γ subtracts from Competitor i’s skill rating, and consequently from his or her win probability. The Γ term is temporary and is only used to adjust for the influence of deck strength on a conditional matchup of two competitors in each match.
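The adjusted and unadjusted columns of Table 1 can be checked with a short script. This is a minimal sketch, not the paper’s implementation: it assumes the win probability is the standard Glicko expected-outcome function evaluated with the opponent’s RD, and that Γ is simply added to Competitor i’s rating before that function is applied. The names `g`, `win_prob`, and `matches` are illustrative, and the Γ values are copied from the table rather than derived from P(a|b).

```python
import math

Q = math.log(10) / 400  # Glicko scaling constant


def g(rd):
    """Glicko attenuation factor: shrinks the rating gap as the opponent's RD grows."""
    return 1 / math.sqrt(1 + 3 * Q**2 * rd**2 / math.pi**2)


def win_prob(skill_i, skill_j, rd_j, gamma=0.0):
    """Assumed form: Glicko expected outcome with gamma added to i's rating."""
    diff = skill_i + gamma - skill_j
    return 1 / (1 + 10 ** (-g(rd_j) * diff / 400))


# (match, skill_i, skill_j, gamma); gamma values are copied from Table 1.
matches = [
    (1, 1500, 1500, 0), (2, 1500, 2000, 0), (3, 2000, 1500, 0),
    (4, 1500, 1500, 130), (5, 1500, 2000, 130), (6, 2000, 1500, 130),
    (7, 1500, 1500, -130), (8, 1500, 2000, -130), (9, 2000, 1500, -130),
]

for match, s_i, s_j, gamma in matches:
    unadjusted = win_prob(s_i, s_j, 350)        # P(i|j), decks ignored
    adjusted = win_prob(s_i, s_j, 350, gamma)   # P(i,a|j,b), deck offset applied
    print(f"Match {match}: P(i|j) = {unadjusted:.4f}, P(i,a|j,b) = {adjusted:.4f}")
```

Printed to four decimals, the two columns can be compared row by row against the P(i|j) and P(i,a|j,b) columns of Table 1.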

In the Glicko-2 system, the amount of skill rating offset is described by the following equation:

where P(a|b) is the win probability of Deck a against Deck b, ceteris paribus.

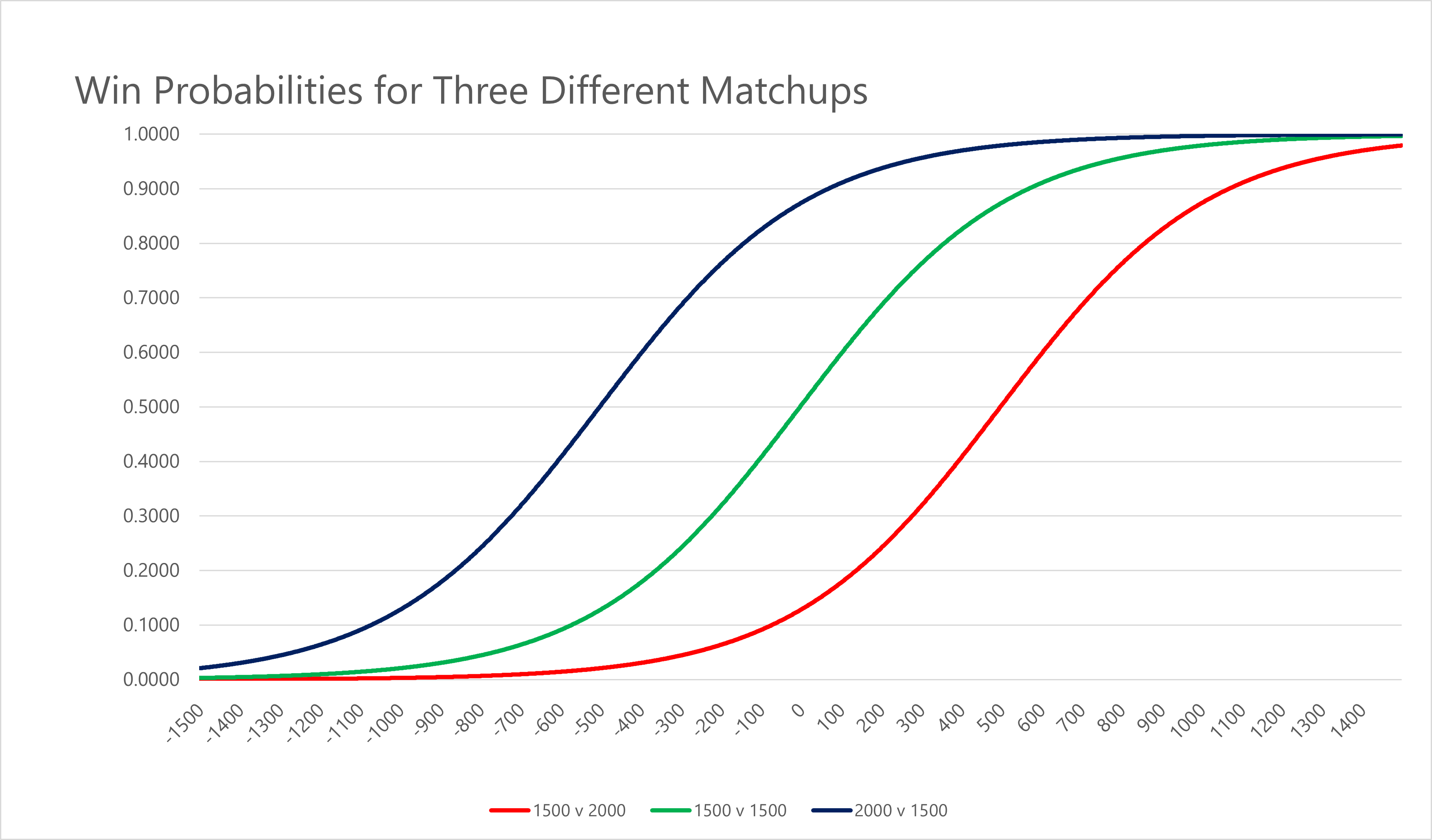

To visualize this, let’s pit two evenly skilled competitors, call them Competitor i and Competitor j, against one another. We plot two identically rated competitors in matches with differing Γ, ranging from -1500 to +1500, and show the win probability for Competitor i. What we see is the chart below:

The sharpest changes in win probability happen at or near Γ = 0, when decks are evenly matched. This means that, theoretically, at or around P(i|j) = 0.5000, P(a|b) is decisive, because the competitors are otherwise evenly matched. At the upper or lower bounds of the curve, changes in P(a|b) are less decisive.

This makes intuitive sense because skill should be the controlling factor. At very low levels of skill, the choice of decks should mean little as the competitor isn’t skilled enough to make full use of the available game tools, while at higher levels of skill, a competitor should perform well relative to weaker competitors no matter the choice of deck (in other words, the choice of deck accounts for increasingly less of the higher-skilled competitor’s performance, the larger the gap in skill).
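The shape of that curve can be tabulated without a plot. The sketch below assumes the standard Glicko expected-outcome function with the opponent’s RD, with Γ added to Competitor i’s rating; that form is an assumption, and `g`, `win_prob`, `curve`, and `slopes` are illustrative names. It sweeps Γ from -1500 to +1500 for two identically rated competitors and measures how much the win probability moves per 100 points of Γ.

```python
import math

Q = math.log(10) / 400  # Glicko scaling constant


def g(rd):
    """Glicko attenuation factor for an opponent with rating deviation rd."""
    return 1 / math.sqrt(1 + 3 * Q**2 * rd**2 / math.pi**2)


def win_prob(skill_i, skill_j, rd_j, gamma=0.0):
    """Assumed form: Glicko expected outcome with gamma added to i's rating."""
    diff = skill_i + gamma - skill_j
    return 1 / (1 + 10 ** (-g(rd_j) * diff / 400))


# Two identically rated competitors (1500, RD 350); only gamma varies.
curve = [(gamma, win_prob(1500, 1500, 350, gamma)) for gamma in range(-1500, 1501, 100)]

# Change in win probability across each 100-point step of gamma.
slopes = [(g0, p1 - p0) for (g0, p0), (_, p1) in zip(curve, curve[1:])]

for gamma, p in curve:
    print(f"gamma = {gamma:+5d}  P = {p:.4f}")

steepest = max(slopes, key=lambda s: s[1])
print("steepest 100-point step starts at gamma =", steepest[0])
```

Under these assumptions the largest steps sit on either side of Γ = 0 and shrink toward both ends of the range, which is the logistic shape described above.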

We can visualize the change in Γ for several different matchups as follows:

Sharper changes occur for competitors that are lower skilled against higher-skilled competitors (e.g., Glicko-2 skill rating 1500 v 2000) than they do for competitors that are higher skilled against lower-skilled ones (e.g., Glicko-2 skill rating 2000 v 1500).
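One way to read “sharper” is as relative change: how large the deck adjustment is compared with the win probability the competitor started with. The sketch below takes that reading, which is an interpretation rather than something the post states, and again assumes the standard Glicko expected-outcome function with Γ added to Competitor i’s rating. The names `g`, `win_prob`, and `relative_change` are illustrative, and Γ = ±130 is taken from Table 1.

```python
import math

Q = math.log(10) / 400  # Glicko scaling constant


def g(rd):
    """Glicko attenuation factor for an opponent with rating deviation rd."""
    return 1 / math.sqrt(1 + 3 * Q**2 * rd**2 / math.pi**2)


def win_prob(skill_i, skill_j, rd_j, gamma=0.0):
    """Assumed form: Glicko expected outcome with gamma added to i's rating."""
    diff = skill_i + gamma - skill_j
    return 1 / (1 + 10 ** (-g(rd_j) * diff / 400))


def relative_change(skill_i, skill_j, gamma, rd_j=350):
    """Fractional change in i's win probability caused by the deck offset."""
    base = win_prob(skill_i, skill_j, rd_j)
    adjusted = win_prob(skill_i, skill_j, rd_j, gamma)
    return (adjusted - base) / base


for skill_i, skill_j in [(1500, 2000), (2000, 1500)]:
    for gamma in (130, -130):
        change = relative_change(skill_i, skill_j, gamma)
        print(f"{skill_i} v {skill_j}, gamma = {gamma:+d}: {change:+.1%}")
```

On this reading, the 1500-rated competitor’s win probability moves by a much larger fraction than the 2000-rated competitor’s for the same Γ, in either direction.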

Let’s take a look at some examples of deck strength’s impact on competitor win probabilities.

Example of Deck Strength Impact

Consider the best competitor in a game, called Competitor m, using the worst deck in the game, called Deck x, in a match against the worst competitor in the game, called Competitor n, using the best deck in the game, called Deck y. The skill gap is so great that m has never lost a match and has a Glicko-2 rating of 3,000, while n learned the rules of the game five minutes before sitting down to play.

How much does the deck of either competitor matter in this case?

Consider the results demonstrated on this table:

Table 2. Illustration of Deck Strength for Best and Worst Competitors in a Game.

| Scenario | State | Γ | Probability | Change* |
|---|---|---|---|---|
| 1 | P(n,x\|m,y) | -638 | 0.0003 | -99.14% |
| 2 | P(n,y\|m,x) | +638 | 0.0349 | +11,533% |
| 3 | P(m,x\|n,y) | -638 | 0.9651 | -3.46% |
| 4 | P(m,y\|n,x) | +638 | 0.9997 | +3.59% |

*The “Change” measures the percentage change in probability between corresponding scenarios. Thus, the Change shown on this table compares the change in probability between Scenarios 1 and 2 and between Scenarios 3 and 4, respectively.

In each case illustrated by Table 2, Competitor m has a skill rating of 3,000 and rating deviation (RD) of 350, and Competitor n has a skill rating of 1,500 and RD of 350. The deck matchup is so lopsided that P(x|y) is just 0.1000 (and conversely, P(y|x) is 0.9000). This means that, ceteris paribus, Deck x beats Deck y with only 0.1000 probability.
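The four scenarios can be recomputed from those inputs. As a sketch only, the code below assumes the standard Glicko expected-outcome function with the opponent’s RD and with Γ added to the first-named competitor’s rating; the Γ values of ±638 are copied from Table 2 rather than derived from P(x|y). The “Change” column is computed here from the probabilities rounded to four decimals, which appears to be how the table’s percentages were obtained. All names are illustrative.

```python
import math

Q = math.log(10) / 400  # Glicko scaling constant


def g(rd):
    """Glicko attenuation factor for an opponent with rating deviation rd."""
    return 1 / math.sqrt(1 + 3 * Q**2 * rd**2 / math.pi**2)


def win_prob(skill_i, skill_j, rd_j, gamma=0.0):
    """Assumed form: Glicko expected outcome with gamma added to i's rating."""
    diff = skill_i + gamma - skill_j
    return 1 / (1 + 10 ** (-g(rd_j) * diff / 400))


# (state, first competitor's skill, opponent's skill, gamma from Table 2)
scenarios = [
    ("P(n,x|m,y)", 1500, 3000, -638),
    ("P(n,y|m,x)", 1500, 3000, +638),
    ("P(m,x|n,y)", 3000, 1500, -638),
    ("P(m,y|n,x)", 3000, 1500, +638),
]

# Round to four decimals first, mirroring the table's Probability column.
probs = [round(win_prob(s_i, s_j, 350, gamma), 4) for _, s_i, s_j, gamma in scenarios]

# Each scenario is compared with its counterpart: 1 with 2, and 3 with 4.
partner = [1, 0, 3, 2]
changes = [(probs[k] - probs[partner[k]]) / probs[partner[k]] for k in range(4)]

for (state, _, _, gamma), p, change in zip(scenarios, probs, changes):
    print(f"{state}  gamma = {gamma:+d}  P = {p:.4f}  change = {change:+.2%}")
```

Because the smallest probability is only about 0.0003, the percentage for Scenario 2 is very sensitive to rounding; computing it from unrounded probabilities gives a noticeably different figure.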

The scenarios in Table 2 clearly illustrate that skill is the controlling factor.

While the choice of deck increases n’s win probability more than a hundredfold from x to y (the +11,533% change in Table 2), even this much help from deck choice does little when compared to m’s superior skill: n still wins with a probability of only 0.0349. Likewise, even if m has the worst deck in the game, he or she has an overwhelming probability of winning.

Only at close levels of skill rating between competitors, as previously illustrated in the examples in Table 1 and the logistic curve described in Figure 1, should deck choice be the decisive factor.

Deck choice does have an influence, and its effects are on a gradient somewhere between the extreme examples of Scenario 1 and Scenario 4 on Table 2.

Putting it All Together

We conceptualize a TCG deck’s contribution to a competitor’s performance as a skill rating offset.

When we compare Competitor i using Deck a to Competitor j using Deck b, we describe the probability of the outcome as:

This is the joint probability of each combination of competitor and deck conditional on one another.

We compute the amount of offset, Γ, in competitor skill rating as a transformation of the win probability of Deck a against Deck b. This preserves the concept that competitors at higher levels of skill rely less on the choice of deck when playing against competitors of lower skill, and vice versa. The best player in the game will beat a newbie, hands down, even if the newbie has the better deck.

In matchups where skill is more evenly matched between competitors, deck strength becomes decisive: the closer the competitors are in skill rating and rating deviation, the more deck strength becomes the controlling factor in determining win probability.
